AI Strategy Needs an Operating Model, Not Demo Energy

A practical CTO operating model for turning AI excitement into owned workflows, human checkpoints, and measurable business leverage.

Most companies do not fail at AI because the tools are weak. They fail because a good demo gets promoted into strategy.

That pattern shows up fast. A team watches an agent build a feature, summarize a meeting, or generate a sales follow-up. The room gets excited. Someone says the company needs to become AI-first. Budgets move before anyone defines ownership, risk, or where the work belongs.

AI can create leverage across engineering, product, support, ops, and sales. The mistake is treating that leverage like a software purchase instead of an operating model change.

What Leaders Get Wrong

The first mistake is measuring adoption by tool usage. Seat counts, prompt libraries, and Slack excitement do not prove the company works faster. They prove people experimented.

The second mistake is letting every department invent its own AI process. Engineering asks agents to write code. Support asks them to draft customer replies. Product asks them to summarize feedback. Sales asks them to write follow-ups. Without shared rules, each team creates a different risk profile, and engineering becomes the cleanup crew.

The fix is not more governance theater. The fix is a small operating model that tells every team how AI work moves from idea to production.

The AI Operating Model

1. Sort work by risk, not department

Low-risk AI work can move fast: summarizing calls, drafting internal notes, turning long-form content into distribution drafts, or creating first-pass support macros.

Higher-risk work needs review: anything touching customer data, billing, permissions, security, production code, public claims, or regulated workflows.

This keeps support, product, ops, and sales moving while protecting the systems that can hurt the company.

2. Assign one owner per workflow

Every AI workflow needs a human owner. Not a tool owner. A workflow owner.

The owner answers four questions: what input starts the workflow, what output is acceptable, who reviews it, and what happens when it fails.

If nobody can answer those questions, the workflow is still an experiment.
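One way to make the four questions concrete is to record the answers per workflow and treat any blank answer as a sign the workflow is still an experiment. The Python sketch below is a minimal illustration under that assumption; the `WorkflowOwnership` class, its field names, and the sample values are hypothetical and not tied to any tool.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowOwnership:
    """Answers to the four owner questions for one AI workflow (hypothetical schema)."""
    owner: str              # the human who owns the workflow
    trigger_input: str      # what input starts the workflow
    acceptable_output: str  # what output is acceptable
    reviewer: str           # who reviews it
    failure_plan: str       # what happens when it fails

    def unanswered(self) -> list[str]:
        """Return the names of fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def is_experiment(self) -> bool:
        """A workflow with any unanswered question is still an experiment."""
        return bool(self.unanswered())


# Sample values are made up for illustration.
triage = WorkflowOwnership(
    owner="support lead",
    trigger_input="new support ticket",
    acceptable_output="suggested category and priority",
    reviewer="",
    failure_plan="fall back to manual triage",
)
print(triage.is_experiment())  # True
print(triage.unanswered())     # ['reviewer']
```

Here the triage workflow has an owner but no reviewer, so it stays in the experiment bucket until that gap is filled.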

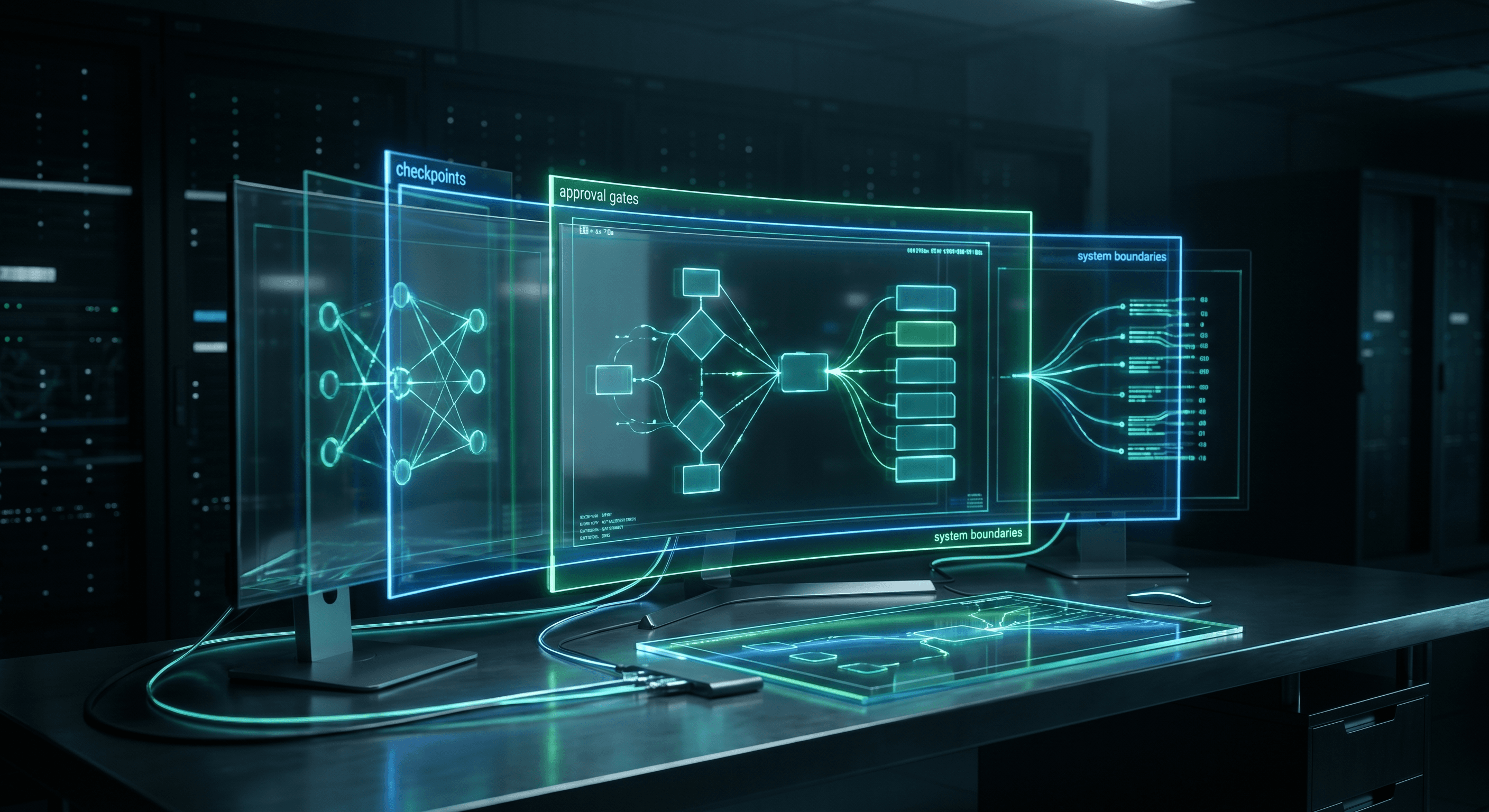

3. Add checkpoints before autonomy

Autonomy sounds efficient until the agent touches the wrong customer, ships the wrong change, or invents a policy. Start with checkpoints.

Let the agent draft. Let a human approve. Let the system log the action. When the workflow proves stable, remove one checkpoint at a time.
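The draft, approve, and log sequence can be sketched in a few lines of Python. This is an illustration, not a reference implementation: `CheckpointedWorkflow`, the `draft` and `approve` callables, and the toy approval rule are all hypothetical stand-ins for a real agent call and a real human review step.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class CheckpointedWorkflow:
    """Draft -> human approval -> logged action (illustrative only)."""
    draft: Callable[[str], str]     # stand-in for the agent call
    approve: Callable[[str], bool]  # stand-in for the human review step
    require_approval: bool = True   # set to False only once the workflow proves stable
    log: list[dict] = field(default_factory=list)

    def run(self, request: str) -> Optional[str]:
        output = self.draft(request)
        approved = self.approve(output) if self.require_approval else True
        # Log every run, approved or not, so rejections stay visible.
        self.log.append({"request": request, "output": output, "approved": approved})
        return output if approved else None


workflow = CheckpointedWorkflow(
    draft=lambda req: f"Draft reply for: {req}",
    approve=lambda text: "refund" not in text.lower(),  # toy rule standing in for a reviewer
)
print(workflow.run("Customer asks about shipping"))  # Draft reply for: Customer asks about shipping
print(workflow.run("Customer asks for a refund"))    # None
print(len(workflow.log))                             # 2
```

Each checkpoint is an explicit switch, so removing one later is a deliberate, reviewable change and not a side effect. Logging stays on even when approval is turned off.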

4. Measure saved loops

Do not measure AI by how impressive the output looks. Measure the loops it removes.

A useful workflow reduces support triage time, shortens PR review prep, speeds up sales follow-up, improves handoffs from product to engineering, or catches operational issues before a person has to search for them.

That is what a CTO can defend to a founder or board.
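To make "saved loops" measurable, one option is to compare how long a loop takes before and after the workflow ships. The Python sketch below assumes you can sample minutes per run in both periods; the function name and every number in it are hypothetical and shown only to illustrate the arithmetic.

```python
from statistics import median


def minutes_saved_per_week(before: list[float], after: list[float], runs_per_week: int) -> float:
    """Estimate weekly minutes saved from the median time per run, before vs. after."""
    return (median(before) - median(after)) * runs_per_week


# Made-up samples of minutes spent per support triage, for illustration only.
triage_before = [12.0, 15.0, 11.0, 14.0, 13.0]
triage_after = [6.0, 7.0, 5.0, 8.0, 6.0]
print(minutes_saved_per_week(triage_before, triage_after, runs_per_week=200))  # 1400.0
```

The median is used here so that one unusually slow ticket does not distort the comparison. A negative result means the workflow added time and should be revisited.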

The Skill File

This is the lightweight skill file I would give a leadership team before rolling AI across departments.

# AI Operating Model Classifier

## Mission

Classify proposed AI workflows by business value, risk, owner, checkpoints, and rollout path.

## Required Input

- department requesting the workflow
- current manual process
- data touched by the workflow
- user or customer impact
- expected output
- human owner
- review requirement

## Risk Levels

Low:

- internal summaries
- first drafts
- formatting
- research collection
- non-customer-facing analysis

Medium:

- customer support drafts
- sales follow-ups
- product requirements
- internal automation that affects team workflows

High:

- production code changes
- billing or permissions
- customer data updates
- security decisions
- public claims or regulated content

## Rollout Rules

For each workflow, return:

1. risk level
2. owner
3. approval checkpoint
4. logging requirement
5. rollback path
6. success metric
7. what must stay human

## Stop Conditions

Pause when:

- no owner exists
- customer data is involved without review
- the workflow changes permissions, billing, or production behavior
- success cannot be measured
- failure would be invisible to the customer or team

The file is short so that leaders will actually use it. A five-page policy dies in a shared drive. A one-page classifier can sit inside onboarding, support playbooks, product workflows, and engineering agent instructions.
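If a team wants the same rules in executable form, the risk levels and stop conditions from the skill file can be sketched as a small Python classifier. Everything below is an assumption layered on the skill file: the `Proposal` fields, the work-type tag strings, treating unknown work types as high risk, and mapping "changes permissions, billing, or production behavior" to two tags are illustrative choices, not part of the original file.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Categories come from the Risk Levels section; the tag strings are hypothetical.
RISK_BY_WORK_TYPE = {
    "internal_summary": Risk.LOW,
    "first_draft": Risk.LOW,
    "formatting": Risk.LOW,
    "research_collection": Risk.LOW,
    "non_customer_facing_analysis": Risk.LOW,
    "customer_support_draft": Risk.MEDIUM,
    "sales_follow_up": Risk.MEDIUM,
    "product_requirements": Risk.MEDIUM,
    "internal_automation": Risk.MEDIUM,
    "production_code_change": Risk.HIGH,
    "billing_or_permissions": Risk.HIGH,
    "customer_data_update": Risk.HIGH,
    "security_decision": Risk.HIGH,
    "public_or_regulated_content": Risk.HIGH,
}


@dataclass
class Proposal:
    work_types: list[str]
    owner: str = ""
    touches_customer_data: bool = False
    has_review: bool = False
    success_metric: str = ""
    failure_is_visible: bool = True


def risk_level(p: Proposal) -> Risk:
    """Highest risk among the work types; unknown types default to HIGH (an assumption)."""
    levels = [RISK_BY_WORK_TYPE.get(t, Risk.HIGH) for t in p.work_types]
    return max(levels, key=lambda r: r.value, default=Risk.HIGH)


def stop_conditions(p: Proposal) -> list[str]:
    """Return the reasons to pause, mirroring the Stop Conditions list."""
    reasons = []
    if not p.owner.strip():
        reasons.append("no owner exists")
    if p.touches_customer_data and not p.has_review:
        reasons.append("customer data is involved without review")
    if {"billing_or_permissions", "production_code_change"} & set(p.work_types):
        reasons.append("changes permissions, billing, or production behavior")
    if not p.success_metric.strip():
        reasons.append("success cannot be measured")
    if not p.failure_is_visible:
        reasons.append("failure would be invisible")
    return reasons


proposal = Proposal(
    work_types=["customer_support_draft", "internal_summary"],
    owner="support lead",
    touches_customer_data=True,
    success_metric="median triage time",
)
print(risk_level(proposal))       # Risk.MEDIUM
print(stop_conditions(proposal))  # ['customer data is involved without review']
```

The sketch covers only the risk level and the stop conditions. The remaining rollout fields (approval checkpoint, logging requirement, rollback path, what must stay human) still need a human owner to fill in.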

A Pattern From Real Teams

Across multiple companies, the best AI adoption wins have come from narrow workflows with clear owners. A support lead trims ticket triage. A product manager turns call notes into better specs. An engineering manager uses agents to prep PR context. Ops watches known failure paths and escalates only when something needs a person.

None of that requires the company to pretend AI can run the business. It requires leadership to decide where AI belongs, where humans keep authority, and how each workflow proves it saved time.

The modern CTO job is not telling everyone to use AI. It is building the operating model that lets the whole company use AI without turning engineering into the repair department.

Get the Full AI Operating Model Classifier

I posted the full AI operating model setup on LinkedIn, including the risk levels, workflow owner checklist, checkpoint rules, and rollout classifier. Comment "Guide" on that post and I'll send the classifier by DM.

Work With Me

I help engineering orgs adopt AI across their entire team: not just the code, but how product, support, and operations work too. If you want your org moving faster without growing headcount, let's talk.

Kris Chase

@krisrchase